Trench Cafe: WIP

Work in Progress: a cafe on the Death Star with a great view of a particular trench.

Surely, someone else will report that terrible bug

I thought, “Surely, someone else will report these terrible bugs. I’ll just wait for a future macOS update.”

I’m still waiting.

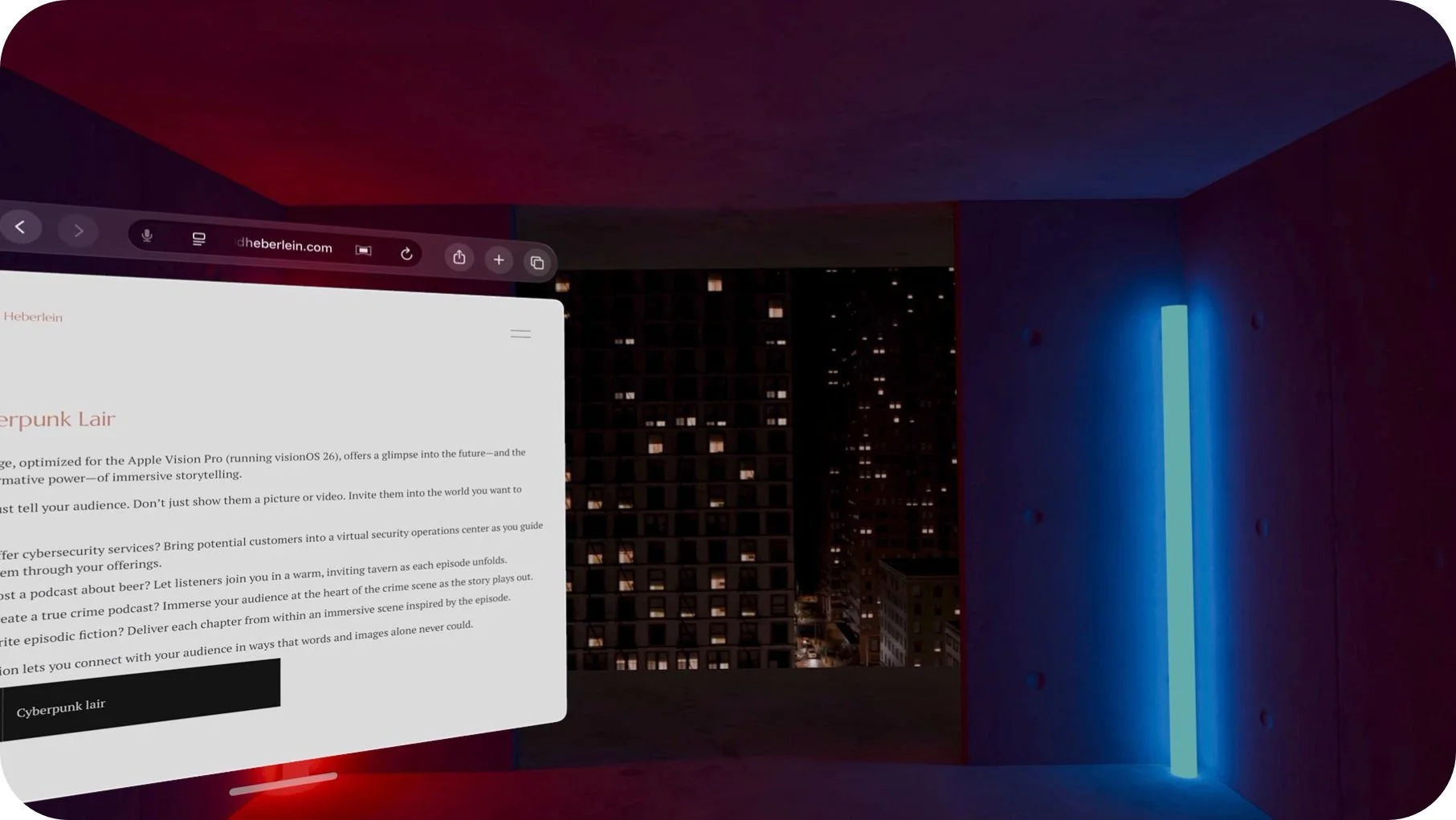

Immersive Web Pages

This page … offers a glimpse into the future—and the transformative power—of immersive storytelling.

Apollo Landings in Panoramic Photos

Panoramic photos from the Apollo missions. They look good on a computer. They look glorious in the Apple Vision Pro.

Tahoe’s Emerald Bay in Panoramic Photos

Panoramic photos of Lake Tahoe’s Emerald Bay, spectacular in the Apple Vision Pro.

Apple needs to support visionOS developers with 3D assets

Apple needs to invest in visionOS assets to help developers.

Mysterious Overflights: Drone Swarms over Critical U.S. Locations

Confirmed drone swarms have been spotted over some of the United States’ most sensitive airspaces. It’s almost as if these drones are taunting the U.S. government.

Is Apple not trying to sell Apple Vision Pro?

Curiously, Apple appears to be making little effort to promote the Apple Vision Pro.

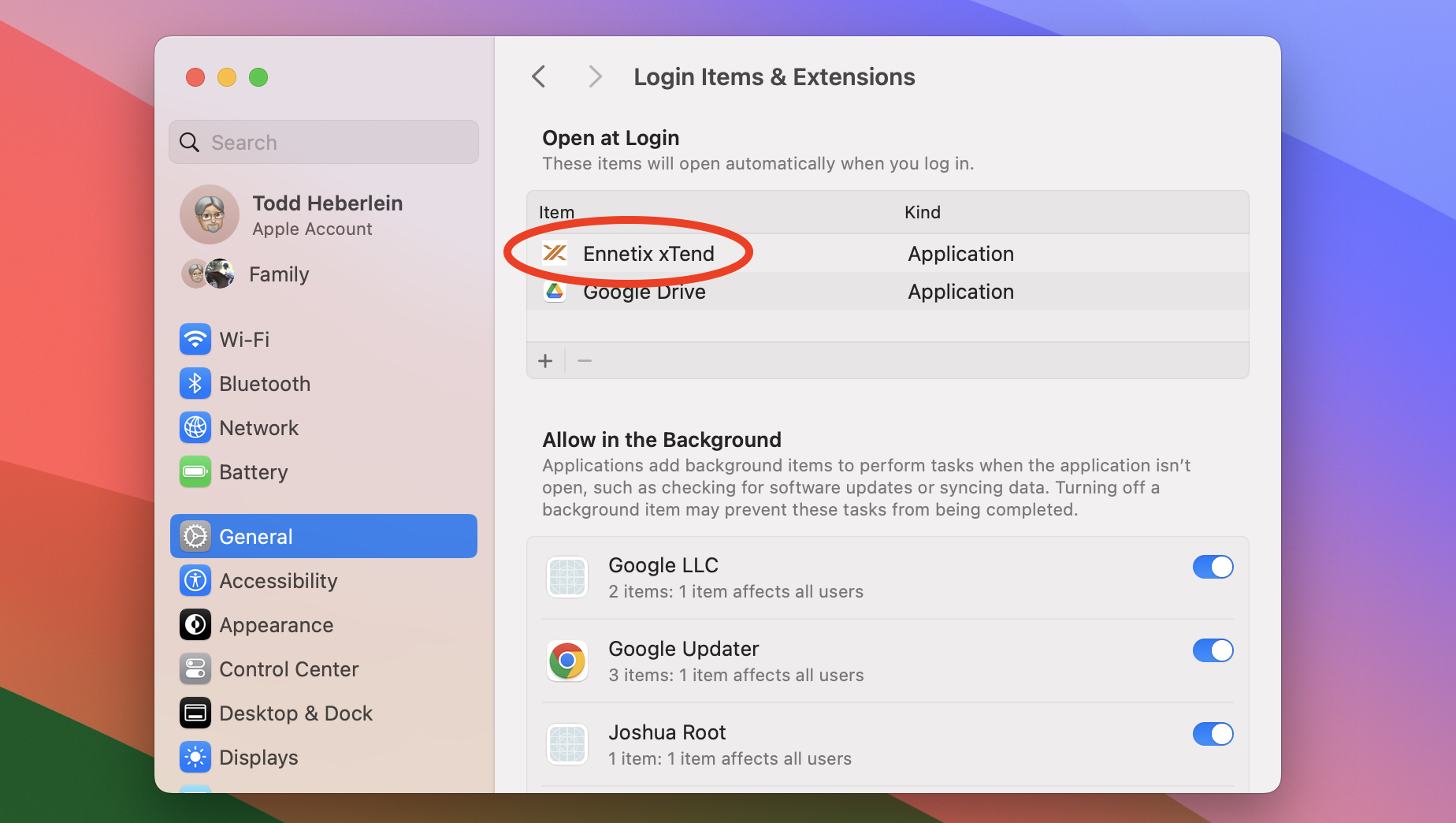

Starting Ennetix xTend at login

How to set Ennetix xTend (or any application) to start at login.